AI-Generated CI/CD Pipelines: The Next Big Thing?

The foundation of modern software delivery is the Continuous Integration/Continuous Delivery (CI/CD) pipeline: the automated workflow that builds, tests, and deploys code changes. However, as applications become more complex, so do the pipelines that manage them. Enter Artificial Intelligence (AI), which is transforming these fixed, manual scripts into intelligent, self-optimizing, and even self-generating systems. This shift from simple automation to intelligent automation is arguably the biggest revolution in DevOps since its inception.

What Are AI-Generated CI/CD Pipelines?

Traditionally, CI/CD pipelines are defined by developers using YAML or other configuration languages. They are a deterministic set of steps that run sequentially or in parallel (Build → Test → Deploy).

AI-Generated CI/CD Pipelines, or more broadly, AI-Powered CI/CD, refer to systems where machine learning (ML) models and generative AI are integrated to autonomously configure, optimize, execute, and monitor the pipeline workflow itself.

Instead of a developer manually writing a new test or a deployment script, the AI:

- Generates Code/Config: Writes the initial pipeline configuration (e.g., a .gitlab-ci.yml file) or generates missing components like unit and integration tests based on a high-level goal and code context.

- Predicts and Adapts: Learns from vast amounts of historical data (build logs, test results, deployment metrics) to make real-time, non-deterministic decisions, such as which tests to run, the optimal resource allocation, or whether to pause a deployment.
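
The "predicts and adapts" behavior can be illustrated with a small risk gate. The following is a minimal sketch, not any vendor's method: the feature names, weights, and thresholds are all hypothetical, and a real system would learn the weights from its own build and deployment history rather than hard-code them.

```python
import math
from dataclasses import dataclass


@dataclass
class ChangeFeatures:
    lines_changed: int
    files_changed: int
    recent_failure_rate: float  # fraction of recent builds that failed, 0..1
    touches_config: bool


# Hypothetical weights for illustration only; a trained model would supply these.
WEIGHTS = {"lines": 0.004, "files": 0.08, "failure_rate": 2.5, "config": 0.9}
BIAS = -3.0


def risk_score(f: ChangeFeatures) -> float:
    """Logistic score in (0, 1): higher means the change looks riskier."""
    z = (
        BIAS
        + WEIGHTS["lines"] * f.lines_changed
        + WEIGHTS["files"] * f.files_changed
        + WEIGHTS["failure_rate"] * f.recent_failure_rate
        + WEIGHTS["config"] * (1.0 if f.touches_config else 0.0)
    )
    return 1.0 / (1.0 + math.exp(-z))


def gate(f: ChangeFeatures, pause_above: float = 0.7, extra_checks_above: float = 0.4) -> str:
    """Map the score to a pipeline action (thresholds are assumptions)."""
    score = risk_score(f)
    if score >= pause_above:
        return "pause"
    if score >= extra_checks_above:
        return "extra_checks"
    return "proceed"
```

A small, isolated change falls through to `"proceed"`, while a large change that touches configuration during a period of frequent failures is paused for review.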

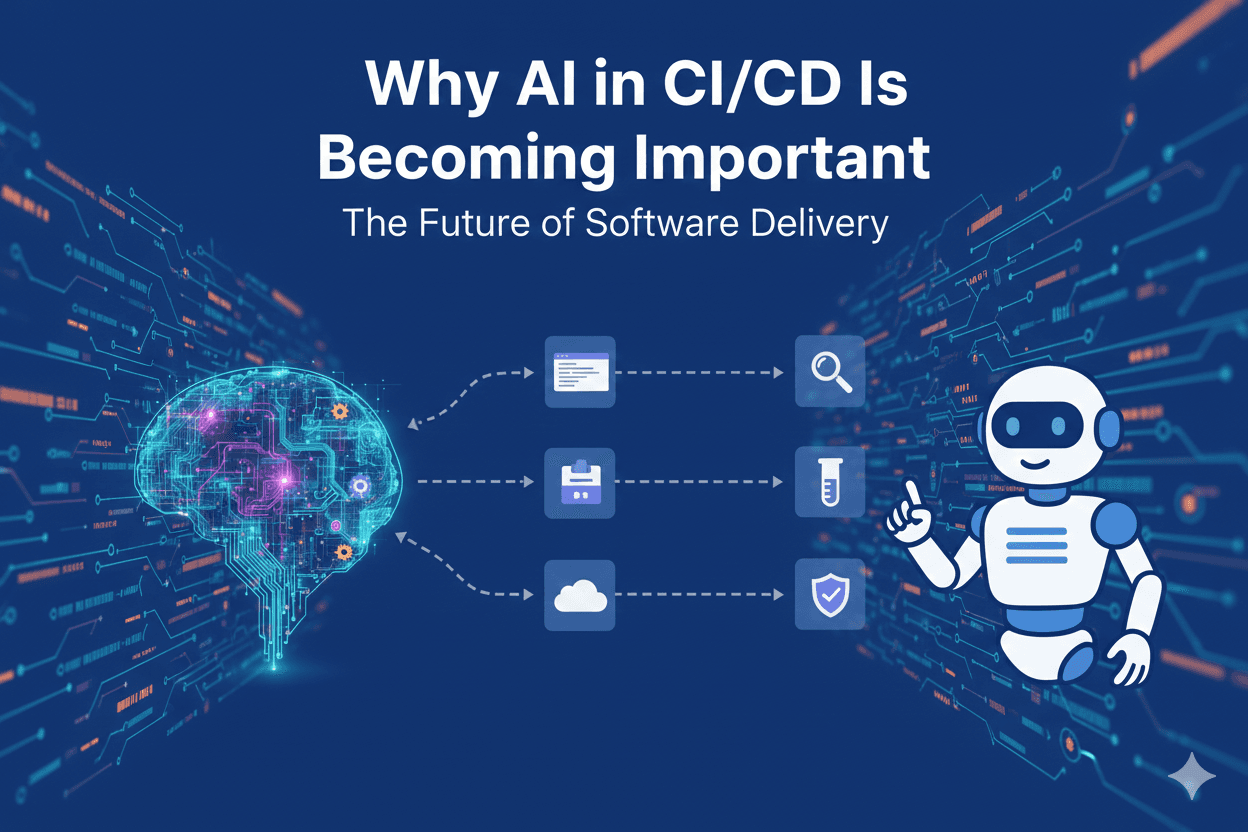

Why AI in CI/CD Is Becoming Important

The pressure on engineering teams in the mid-2020s has created bottlenecks that traditional automation struggles to solve:

- Release Speed vs. Reliability Trade-off: Teams are expected to release features faster, but complex microservices architectures increase the risk of failed deployments and downtime. AI offers a way to have both speed and predictive reliability.

- Cognitive Load: Even with automation, developers spend significant time debugging flaky tests, troubleshooting build failures, and manually overseeing deployments. AI shifts the burden of repetitive, context-switching tasks away from human engineers.

- Pipeline Complexity and Maintenance: As the number of services and environments grows, maintaining hundreds of complex pipeline-as-code files becomes a major overhead. AI can self-optimize and generate these configurations, simplifying maintenance.

- Cost Efficiency: Traditional pipelines often run all tests and builds, regardless of the code change, wasting cloud compute resources. AI optimizes these steps, leading to significant cost savings.

How AI Generates CI/CD Pipelines

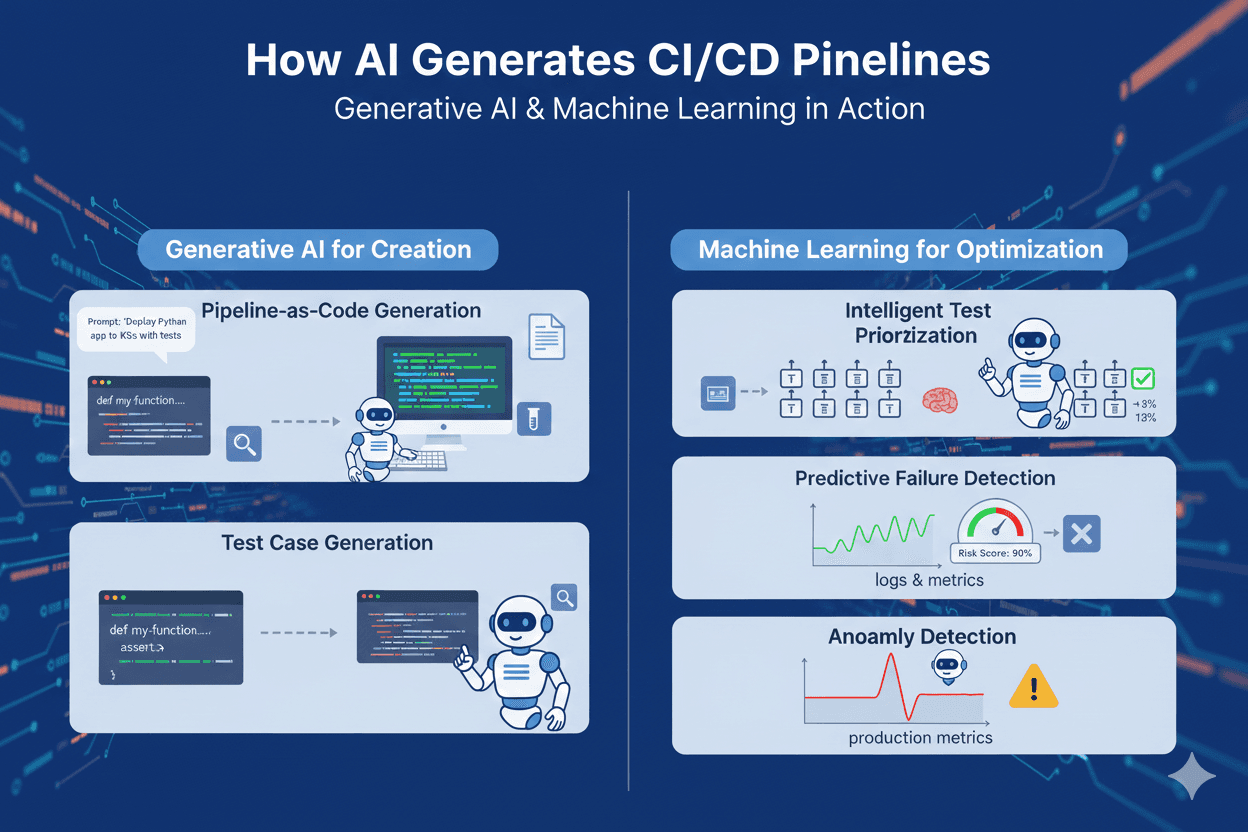

The "generation" aspect primarily uses Generative AI and Machine Learning across different phases:

Generative AI for Pipeline & Code Creation:

- Pipeline-as-Code Generation: Large Language Models (LLMs) are trained on vast amounts of public and proprietary CI/CD configuration (such as GitHub Actions YAML or Jenkinsfiles). An engineer can write a simple prompt ("Deploy my Python microservice to Kubernetes in three regions with a full test suite") and the AI will generate the initial, functional configuration file.

- Test Case Generation: AI generates unit and integration tests for new or modified code. This dramatically increases test coverage and reduces the manual effort of writing boilerplate tests.
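
Generated configuration still needs a sanity check before it is committed. The sketch below is one possible guardrail, assuming the generated `.gitlab-ci.yml` has already been parsed into a Python dict (parsing is left out). It relies only on the GitLab CI conventions of a top-level `stages` list and per-job `stage` and `script` keys; the required stage names and the function name `validate_pipeline` are hypothetical, and the check is deliberately stricter than GitLab itself, which lets a job omit `stage` or use `trigger` instead of `script`.

```python
from __future__ import annotations

from typing import Any

# Assumed policy for this sketch: every generated pipeline must have these stages, in this order.
REQUIRED_STAGES = ["build", "test", "deploy"]

# A subset of GitLab CI top-level keywords that are not job definitions.
RESERVED_KEYS = {"stages", "variables", "default", "include", "workflow"}


def validate_pipeline(config: dict[str, Any]) -> list[str]:
    """Return a list of problems found in a parsed pipeline config (empty means OK)."""
    problems: list[str] = []
    stages = config.get("stages", [])

    # Check that the required stages exist and appear in the expected order.
    positions = [stages.index(s) if s in stages else -1 for s in REQUIRED_STAGES]
    for name, pos in zip(REQUIRED_STAGES, positions):
        if pos < 0:
            problems.append(f"missing stage: {name}")
    present = [p for p in positions if p >= 0]
    if present != sorted(present):
        problems.append("stages build/test/deploy are out of order")

    # Check each job: it must name a declared stage and carry a script.
    for job_name, job in config.items():
        if job_name in RESERVED_KEYS or job_name.startswith("."):
            continue  # global keyword or hidden template job
        if not isinstance(job, dict):
            problems.append(f"job {job_name!r} is not a mapping")
            continue
        stage = job.get("stage")
        if stage not in stages:
            problems.append(f"job {job_name!r} uses undefined stage {stage!r}")
        if not job.get("script"):
            problems.append(f"job {job_name!r} has no script")
    return problems
```

A non-empty result can be fed back to the model as a correction prompt or surfaced to the engineer reviewing the generated file.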

Machine Learning for Optimization and Prediction:

- Intelligent Test Prioritization (Test Impact Analysis): ML models analyze a code change (the diff) against historical test data. They then determine the minimum set of tests required to validate the change, skipping irrelevant tests to cut build and test times, reportedly by 30-50%, though the actual saving depends on the codebase and test suite.

- Predictive Failure Detection: Models are trained on logs, metrics, and deployment history. They can assign a risk score to a commit or deployment before it runs, predicting with high accuracy whether it will fail in production and triggering additional checks or a pause.

- Anomaly Detection: AI monitors running pipelines and production metrics to detect unusual behavior (e.g., a sudden increase in memory usage or a spike in latency) that a static threshold would miss.
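
The test prioritization idea above can be sketched without any ML library. This is a simplified stand-in, not a production model: it counts how often each test failed when a given file changed, then selects only tests with such a history. The function names, the `always_run` smoke set, and the `min_hits` cutoff are assumptions made for illustration.

```python
from collections import defaultdict


def build_failure_index(history):
    """Build {file: {test: co-failure count}} from past builds.

    `history` is an iterable of (changed_files, failed_tests) pairs.
    """
    index = defaultdict(lambda: defaultdict(int))
    for changed_files, failed_tests in history:
        for path in changed_files:
            counts = index[path]  # records the file even if nothing failed
            for test in failed_tests:
                counts[test] += 1
    return index


def select_tests(changed_files, index, all_tests, always_run=(), min_hits=1):
    """Pick the tests historically linked to the changed files."""
    selected = set(always_run)
    for path in changed_files:
        if path not in index:
            # No history for this file: be conservative and run everything.
            return sorted(all_tests)
        for test, hits in index[path].items():
            if hits >= min_hits:
                selected.add(test)
    # Drop tests that no longer exist in the current suite.
    return sorted(selected & set(all_tests))
```

Falling back to the full suite for unknown files is a deliberate design choice here: a skipped test that should have run is more costly than a slow build.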

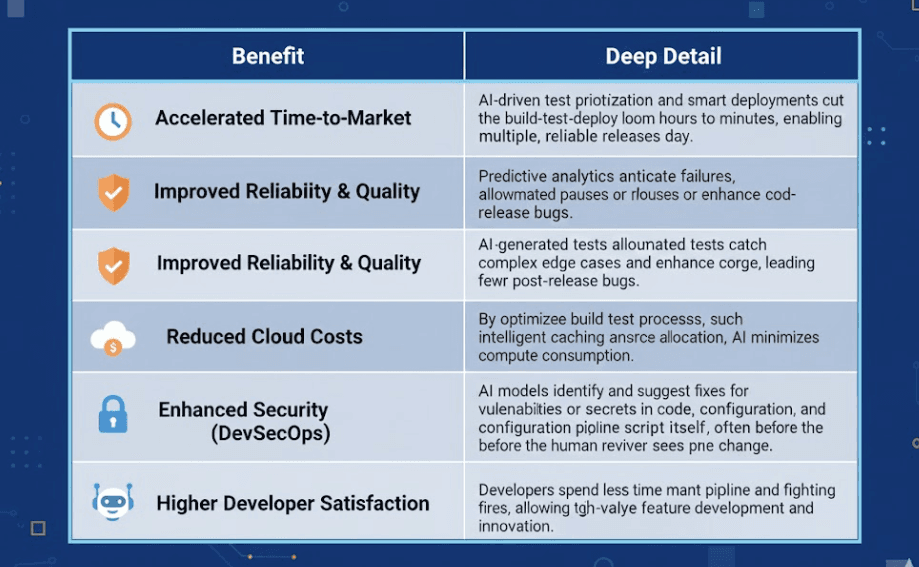

Benefits of AI-Generated CI/CD Pipelines

Real-World Tools Using AI in CI/CD (2025–2026)

The market is rapidly shifting toward CI/AI:

- GitHub Actions / Copilot: GitHub Copilot extends its generative capabilities into DevOps by assisting with writing complex GitHub Actions YAML workflows and generating documentation or release notes based on the commit history.

- Harness.io: A leader in AI-driven delivery, Harness uses ML for Continuous Verification, autonomously comparing new deployment metrics against baseline data to verify release success and automatically roll back on failure.

- Launchable: Focuses specifically on Test Intelligence, using ML to dynamically select and prioritize only the most relevant tests for a given code change, drastically reducing CI/CD execution time.

- AWS CodeCatalyst: Integrates AI-powered tools for suggesting project configurations, complete with pre-configured build, test, and deploy stages for common cloud-native applications.

- ML-Powered Observability Platforms (e.g., Datadog, Dynatrace): While not CI/CD tools, their integration provides the critical data layer. They use AI to perform automated Root Cause Analysis (RCA) and feed anomaly detection alerts directly back into the CI/CD system to trigger a "self-healing" response.

Use Cases of AI-Generated CI/CD Pipelines

- Smart Testing in Fintech: A finance application, with thousands of compliance tests, uses an AI model to analyze a small change in the payments microservice. The AI determines that only 5% of the total test suite is relevant, cutting the test execution time from 90 minutes to 5 minutes, without compromising compliance.

- Adaptive Canary Rollouts for E-commerce: During a major holiday sale, an e-commerce platform rolls out a new recommendation engine feature to 1% of users. The AI monitors real-time transaction latency. If the AI detects a slight, anomalous spike in latency among the canary group, it automatically pauses the rollout and rolls back the change before a human is alerted, preventing lost revenue.

- Autonomous Incident Remediation: A container deployment fails due to a memory limit error on a Kubernetes cluster. The AI-enabled pipeline receives the error log, identifies the root cause, and, instead of failing, automatically edits the Kubernetes manifest to increase the memory limit and retries the deployment, thus self-healing the pipeline.
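
The canary scenario above comes down to comparing canary latency against a baseline and acting on a statistically unusual shift. A minimal sketch, assuming latency samples in milliseconds are already collected; the function name, the z-score threshold of 3, and the minimum sample count are illustrative assumptions, and a real system would likely use a more robust statistical test.

```python
from statistics import mean, stdev


def canary_decision(baseline_ms, canary_ms, z_threshold=3.0, min_samples=5):
    """Return "rollback" if canary latency is anomalously high, else "continue"."""
    if len(baseline_ms) < 2 or len(canary_ms) < min_samples:
        return "continue"  # not enough data to judge yet

    mu = mean(baseline_ms)
    sigma = stdev(baseline_ms)
    canary_mean = mean(canary_ms)

    if sigma == 0:
        # Perfectly flat baseline: any increase counts as a deviation.
        return "rollback" if canary_mean > mu else "continue"

    # z-score of the canary mean, using the standard error for the canary sample size.
    standard_error = sigma / len(canary_ms) ** 0.5
    z = (canary_mean - mu) / standard_error
    return "rollback" if z > z_threshold else "continue"
```

Because the comparison is relative to the baseline's own variance, a shift of a few milliseconds can trigger a rollback on a stable service where a fixed static threshold would stay silent.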

Challenges & Limitations

Despite the transformative benefits, the path to AI-driven CI/CD is not without hurdles:

- Data Quality and Volume: AI models are only as good as their training data. Collecting, cleaning, and labeling years of historical build logs, test results, and deployment metrics is a significant, time-consuming prerequisite. Biased or incomplete data leads to flawed automation.

- Trust and Explainability (XAI): When an AI model decides to skip a critical test or roll back a deployment, engineers need to understand why. The "black-box" nature of some ML models can lead to a trust gap and engineer resistance. Explainable AI (XAI) is crucial to overcome this.

- Complexity of Integration: Integrating AI tools into an existing, often heterogeneous (Jenkins, GitLab, ArgoCD, etc.), CI/CD environment requires advanced platform engineering skills and significant refactoring.

- Security Risks: Publicly hosted Generative AI models pose a risk if proprietary code or configuration is included in prompts sent to them. Secure, private model training and hosting is essential.

The Future of CI/CD: Autonomous Pipelines

The ultimate destination for AI in DevOps is the Autonomous Pipeline or Autonomous DevOps.

This future moves beyond simply optimizing steps to having a system that can self-manage, self-heal, and self-optimize with minimal or no human intervention.
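
Self-healing of the kind described in the memory-limit use case can be sketched as a bounded retry loop. This is an illustration under stated assumptions: `deploy` is a hypothetical callable that returns `"ok"` or a failure reason such as `"OOMKilled"`, the manifest is a Deployment-style dict, only limits written like `512Mi` are handled, and the growth factor and ceiling are arbitrary.

```python
import copy


def bump_memory_limits(manifest, factor=1.5, ceiling_mib=4096):
    """Return a copy of the manifest with every container's memory limit raised."""
    patched = copy.deepcopy(manifest)  # never mutate the caller's manifest
    for container in patched["spec"]["template"]["spec"]["containers"]:
        limits = container.setdefault("resources", {}).setdefault("limits", {})
        current = limits.get("memory")
        if current is None or not current.endswith("Mi"):
            raise ValueError("this sketch only handles limits such as '512Mi'")
        new_mib = int(int(current[:-2]) * factor)
        if new_mib > ceiling_mib:
            raise RuntimeError("memory ceiling reached; escalate to a human")
        limits["memory"] = f"{new_mib}Mi"
    return patched


def deploy_with_self_heal(manifest, deploy, max_attempts=3):
    """Retry a deployment, raising memory limits after each out-of-memory failure.

    Returns (final_manifest, attempts_used).
    """
    for attempt in range(1, max_attempts + 1):
        result = deploy(manifest)
        if result == "ok":
            return manifest, attempt
        if result != "OOMKilled":
            # Only one failure mode is auto-remediated; everything else is surfaced.
            raise RuntimeError(f"unrecoverable deployment failure: {result}")
        manifest = bump_memory_limits(manifest)
    raise RuntimeError("deployment still failing after self-heal attempts")
```

The attempt cap and memory ceiling keep the automation from silently consuming cluster resources, which is where the human governance role comes in.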

Key capabilities of this autonomous future include:

- Dynamic Pipeline Generation: Pipelines that write and update their own configuration files based on changes in application code, microservice dependencies, or environment changes.

- Reinforcement Learning (RL): The system continuously learns from the success or failure of its previous decisions (e.g., a successful rollout yields a 'reward'). This continuous feedback loop refines the pipeline's deployment strategy over time, making it smarter with every release.

- Proactive Security: AI-driven threat modeling that anticipates vulnerabilities and automatically patches them before they become known exploits.
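
The reward-driven feedback loop in the Reinforcement Learning item can be illustrated with an epsilon-greedy multi-armed bandit, one of the simplest forms of learning from rewards. This is a toy sketch rather than a full RL system: the class name `StrategyBandit`, the strategy labels, and the reward scale (1.0 for a successful rollout, 0.0 for a failed one) are all assumptions.

```python
import random


class StrategyBandit:
    """Epsilon-greedy chooser over a fixed set of deployment strategies."""

    def __init__(self, strategies, epsilon=0.1, seed=None):
        self.epsilon = epsilon
        self.rng = random.Random(seed)  # seedable for reproducible runs
        self.counts = {s: 0 for s in strategies}
        self.values = {s: 0.0 for s in strategies}  # running mean reward

    def choose(self):
        """Explore with probability epsilon, otherwise exploit the best strategy so far."""
        if self.rng.random() < self.epsilon:
            return self.rng.choice(list(self.counts))
        return max(self.values, key=self.values.get)

    def record(self, strategy, reward):
        """Update the running mean reward for a strategy after a rollout."""
        self.counts[strategy] += 1
        n = self.counts[strategy]
        self.values[strategy] += (reward - self.values[strategy]) / n
```

After each release the pipeline would call `record` with the observed outcome, so later calls to `choose` lean toward whichever strategy has succeeded most often while still occasionally trying the alternatives.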

In this model, the DevOps engineer's role shifts from managing execution to governing the system: defining high-level policies, auditing AI decisions, and focusing on complex architectural challenges.

Conclusion

AI-Generated and AI-Powered CI/CD pipelines are not a distant concept; they are the present-day evolution of software delivery. The 2025–2026 period is defined by a transition from static automation to intelligent, adaptive systems that can predict, optimize, and self-correct. While challenges like data quality and the need for explainability remain, the competitive advantage gained from faster, cheaper, and more reliable releases makes the adoption of AI in CI/CD an inevitable and necessary next step for any modern engineering organization. The next big thing is here, and it’s intelligent.
