Introducing Gemini Embedding 2: Google’s Next-Gen Multimodal Embedding Model

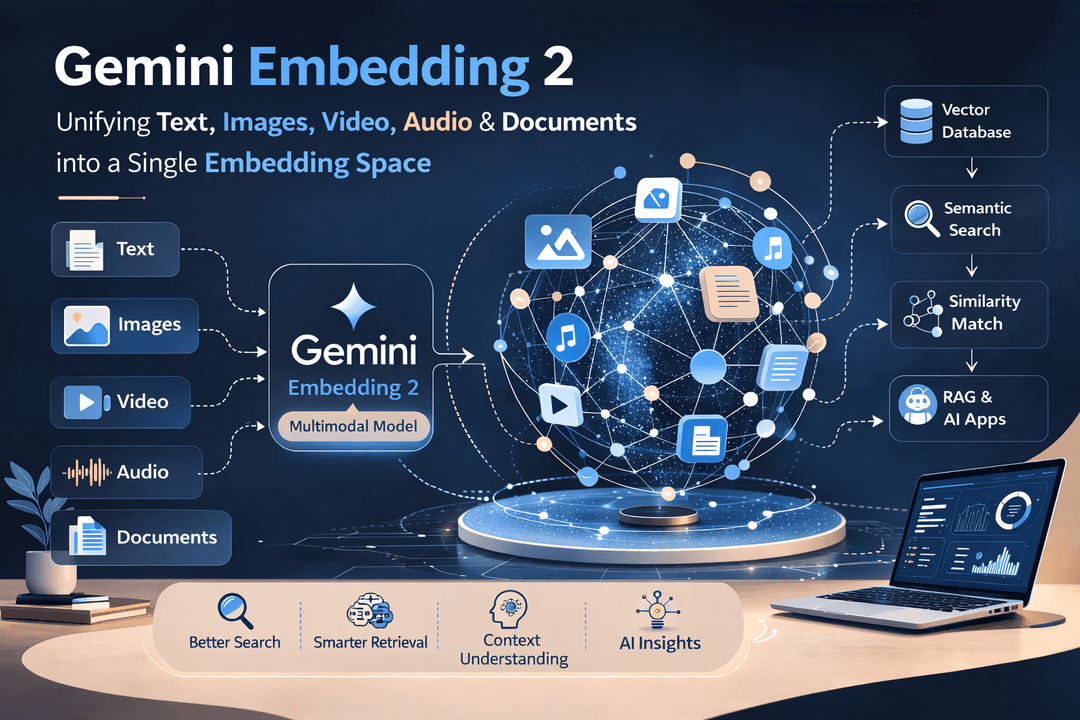

Artificial Intelligence continues to evolve rapidly, and embeddings remain a foundational component for many AI applications such as search, recommendation systems, and Retrieval-Augmented Generation (RAG). Recently, Google introduced Gemini Embedding 2, a new multimodal embedding model designed to bring multiple types of data into a single embedding space.

This lets developers represent text, images, video, audio, and documents in one vector space, so a single similarity search can span all of them. That opens new possibilities for building intelligent, context-aware systems.

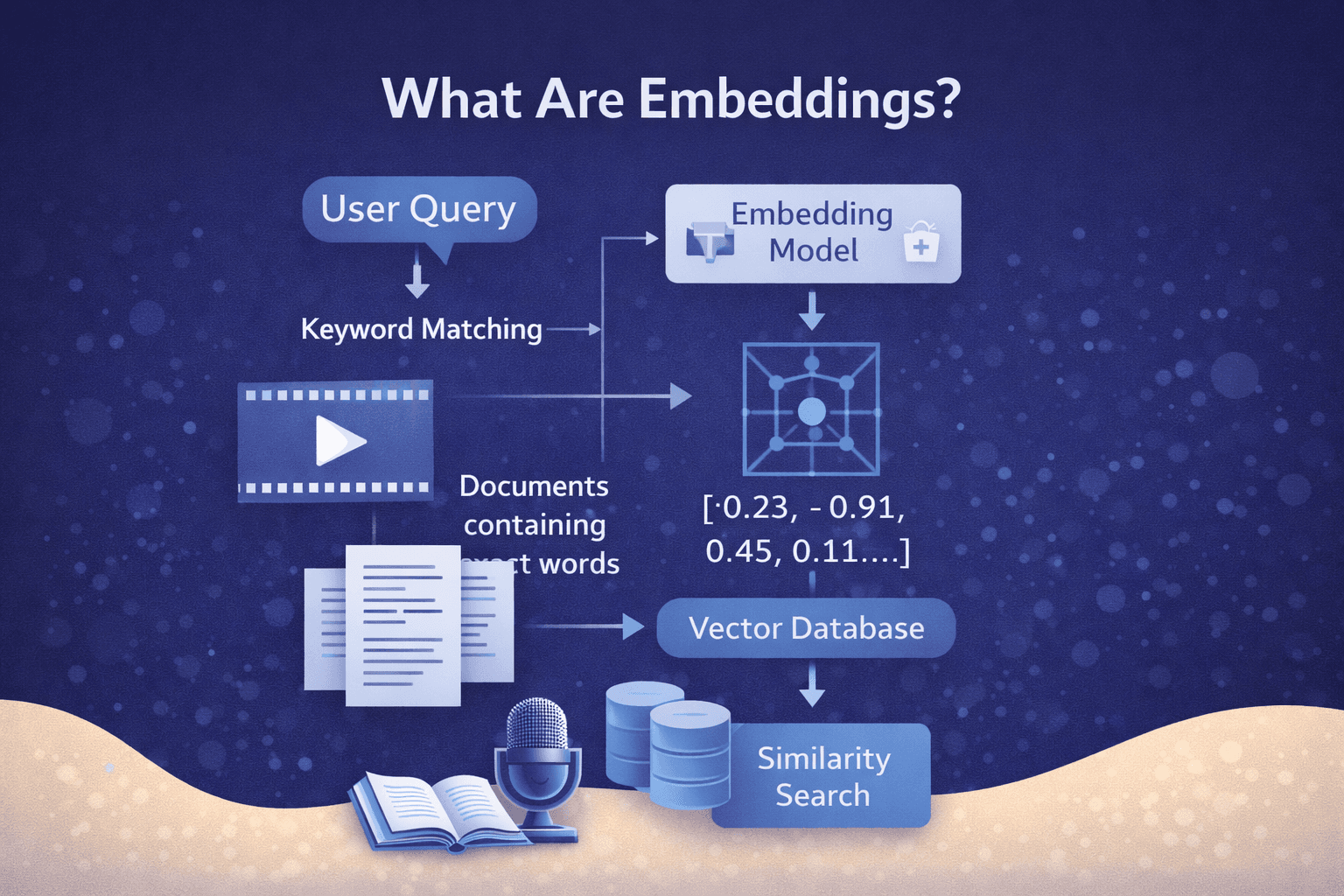

What Are Embeddings?

Embeddings are numerical vector representations of data that capture semantic meaning. Instead of treating information as plain text or raw media, embedding models convert content into vectors so that items with similar meaning end up close together. A system can then measure similarity and relatedness numerically.

For example, in a search system, embeddings help determine that a query like “AI research papers” is closely related to documents about machine learning publications.
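To make "closely related" concrete, here is a minimal sketch of cosine similarity, one common way to compare embedding vectors. The three-dimensional vectors are made-up toy values for illustration, not output from any real model; real embeddings typically have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


# Toy vectors (hypothetical values, not produced by a real embedding model).
query = [0.9, 0.1, 0.0]            # stands in for "AI research papers"
ml_publications = [0.8, 0.2, 0.1]  # a document about machine learning publications
cooking_recipes = [0.0, 0.1, 0.9]  # an unrelated document

related = cosine_similarity(query, ml_publications)
unrelated = cosine_similarity(query, cooking_recipes)
print(f"related: {related:.3f}, unrelated: {unrelated:.3f}")
```

A score near 1 means the vectors point in almost the same direction, and a score near 0 means they have little in common. A search system would rank the machine learning document above the unrelated one.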

Embeddings are widely used in:

- Semantic search

- Recommendation systems

- Question answering

- Document retrieval

- Retrieval-Augmented Generation (RAG)

Introducing Gemini Embedding 2

Gemini Embedding 2 extends the capabilities of previous embedding models by supporting multimodal data. This means the model can generate embeddings for different data formats and represent them in the same vector space.

This unified approach allows developers to perform similarity searches across multiple modalities.

For example:

- Searching images using text queries

- Finding relevant videos based on audio transcripts

- Retrieving documents using visual inputs

- Matching audio clips with textual descriptions

By embedding all modalities into a shared representation, AI systems can understand relationships between different types of information.

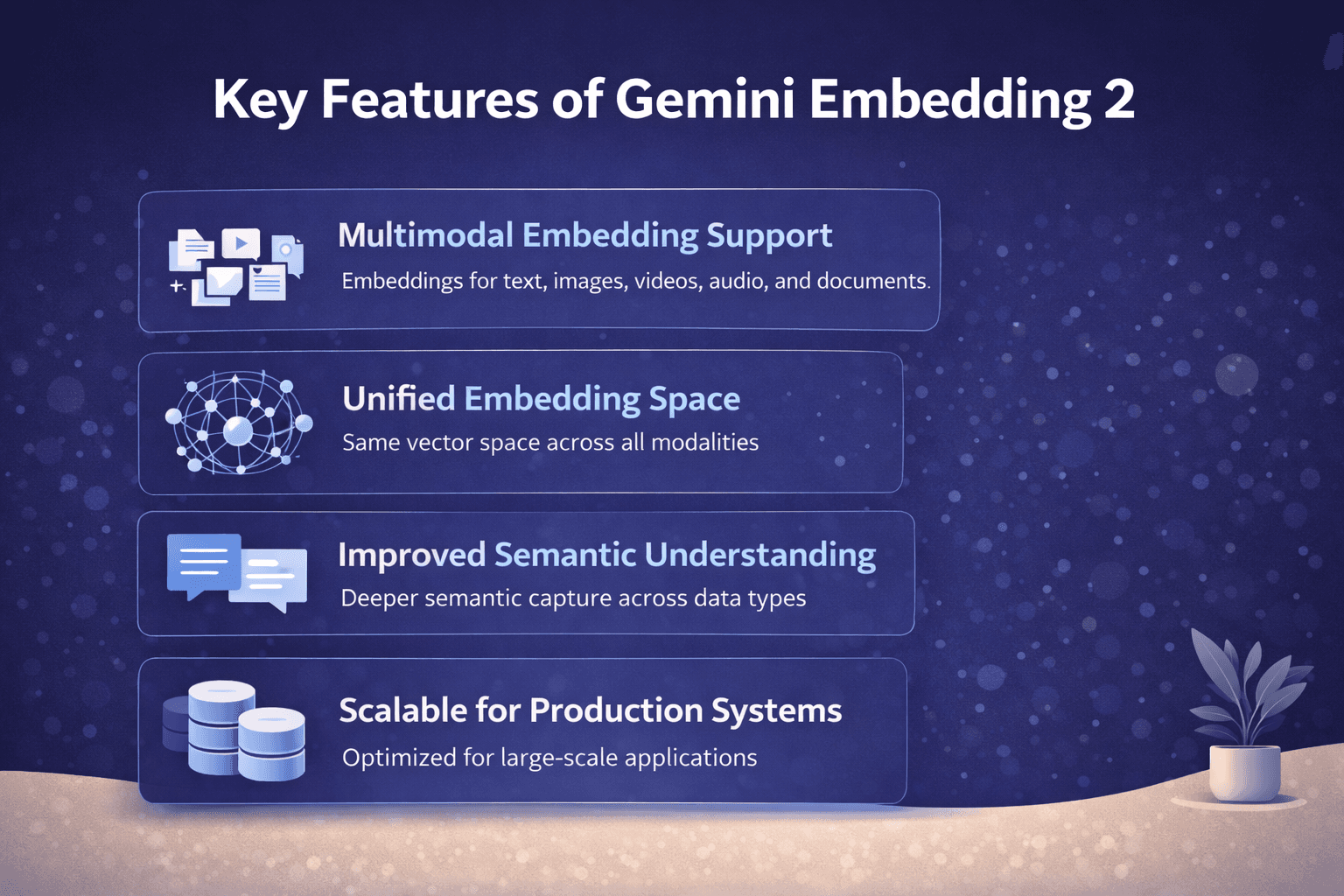

Key Features of Gemini Embedding 2

1. Multimodal Embedding Support

The model supports embeddings for:

- Text

- Images

- Video

- Audio

- Documents

This allows cross-modal understanding and retrieval.

2. Unified Embedding Space

All modalities are mapped into a shared embedding space, enabling similarity comparison between different data types.

3. Improved Semantic Understanding

Gemini Embedding 2 is designed to capture deeper semantic relationships across media formats, improving search and retrieval quality.

4. Scalable for Production Systems

The model is optimized for large-scale applications, making it suitable for enterprise systems that process vast amounts of multimedia content.

Potential Use Cases

Multimodal Search Engines

- Users can search across videos, images, and documents using natural language queries.

Content Recommendation

- Streaming platforms can recommend videos based on user interaction with text descriptions or images.

Knowledge Retrieval Systems

- Organizations can build systems that retrieve relevant documents, presentations, and multimedia content.

AI Assistants

- Assistants powered by embeddings can better understand queries involving multiple media formats.

Impact on AI Development

The introduction of Gemini Embedding 2 highlights the growing importance of multimodal AI systems. As AI applications increasingly deal with diverse data types, unified embedding models will become critical infrastructure.

By enabling developers to process and retrieve information across text, visuals, and audio, Gemini Embedding 2 simplifies the creation of next-generation AI tools.

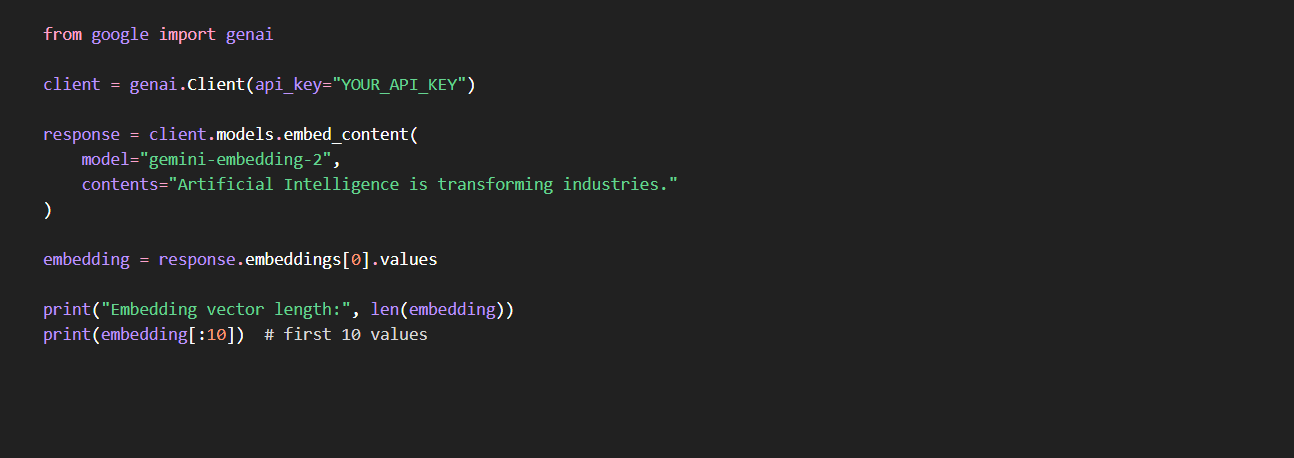

Using Gemini Embedding 2 with the Gemini API

Developers can generate embeddings using models provided by Google through the Gemini API. These embeddings can then be stored in vector databases and used for semantic search, recommendation engines, or Retrieval-Augmented Generation (RAG).

The Gemini Embedding 2 model can generate embeddings for multiple data types, including text, images, video, audio, and documents.

Example: Generate Text Embeddings (Python)
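A minimal sketch of such a call, assuming the `google-genai` Python SDK (`pip install google-genai`) and an API key configured in the environment. The model ID is a placeholder rather than a confirmed identifier, so check Google's model list for the exact Gemini Embedding 2 name. The helper name `embed_text` is introduced here purely for illustration.

```python
# Placeholder model ID (an assumption): replace it with the Gemini Embedding 2
# identifier listed in Google's documentation.
MODEL_ID = "YOUR_GEMINI_EMBEDDING_MODEL_ID"


def embed_text(client, text, model=MODEL_ID):
    """Return the embedding of `text` as a list of floats.

    `client` is expected to be a `google.genai.Client` instance.
    """
    result = client.models.embed_content(model=model, contents=text)
    # One input produces one embedding; `.values` holds the vector itself.
    return list(result.embeddings[0].values)


# Usage (needs the google-genai package, network access, and an API key):
#
#   from google import genai
#   client = genai.Client()  # reads the API key from the environment
#   vector = embed_text(client, "Embeddings capture semantic meaning.")
#   print(len(vector))       # dimensionality of the returned vector
```

Passing the client in as an argument keeps the helper easy to test with a stand-in object and avoids creating a new client for every request.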

What this does

- Sends text to the Gemini Embedding model

- Converts the text into a numerical vector

- Returns a vector that represents the semantic meaning of the content

You can store this vector in a vector database such as Pinecone or Weaviate, or index it with a similarity-search library such as FAISS.

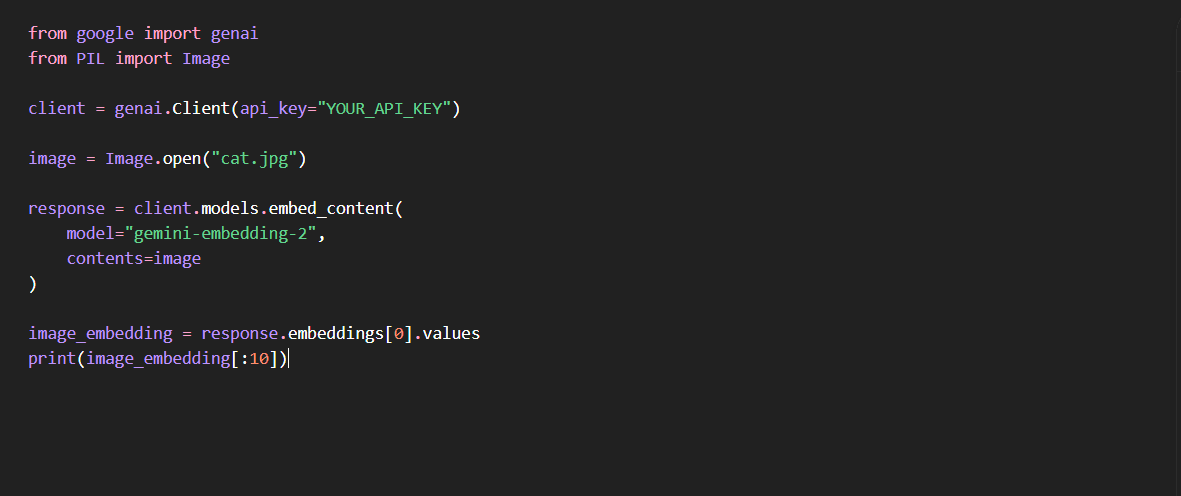

Example: Generate Image Embeddings (Python)
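A minimal sketch, assuming the `google-genai` Python SDK, an API key configured in the environment, and a placeholder model ID. Passing the image bytes as a `types.Part` to `embed_content` is an assumption about how the multimodal model accepts images, so confirm the exact input format in the Gemini API documentation. The helper names `guess_image_mime_type` and `embed_image` are illustrative.

```python
import mimetypes
from pathlib import Path

# Placeholder model ID (an assumption): replace it with the Gemini Embedding 2
# identifier listed in Google's documentation.
MODEL_ID = "YOUR_GEMINI_EMBEDDING_MODEL_ID"


def guess_image_mime_type(path):
    """Guess an image MIME type such as "image/png" from the file extension."""
    mime_type, _ = mimetypes.guess_type(str(path))
    if mime_type is None or not mime_type.startswith("image/"):
        raise ValueError(f"not a recognized image file: {path}")
    return mime_type


def embed_image(client, path, model=MODEL_ID):
    """Return the embedding of the image at `path` as a list of floats.

    `client` is expected to be a `google.genai.Client` instance.
    """
    # Imported lazily so the MIME helper above works without the SDK installed.
    from google.genai import types

    image_part = types.Part.from_bytes(
        data=Path(path).read_bytes(),
        mime_type=guess_image_mime_type(path),
    )
    # Assumption: the multimodal embedding model accepts image parts here.
    result = client.models.embed_content(model=model, contents=[image_part])
    return list(result.embeddings[0].values)
```

With the SDK installed, a call such as `embed_image(genai.Client(), "photo.jpg")` would return the image's vector, which can be stored alongside text vectors from the same model.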

This allows systems to perform:

- image similarity search

- visual content retrieval

- cross-modal search (text → image)

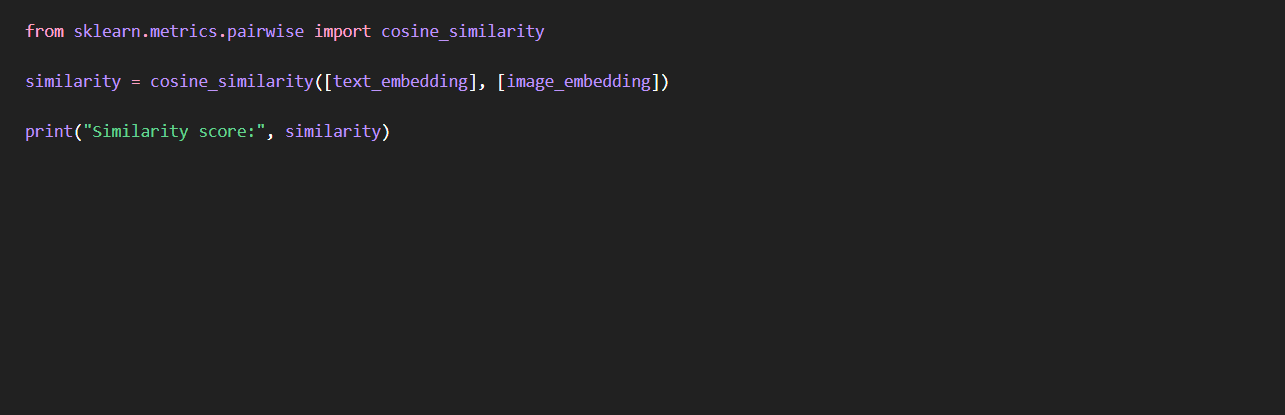

Example: Multimodal Search

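Because all modalities share one embedding space, you can compare embeddings from different modalities directly.

Example workflow:

- Convert the images into embeddings

- Convert the user's text query into an embedding

- Compute the similarity between the query embedding and each image embedding (for example, cosine similarity), then return the closest matches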
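The workflow above can be sketched as follows. To keep the example runnable offline, the image and query vectors are hand-written toy values standing in for real model output; in practice both would come from the same embedding model. The function name `rank_by_similarity` and the file names are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms


def rank_by_similarity(query_vector, indexed_vectors, top_k=2):
    """Return the `top_k` (item_id, score) pairs most similar to the query."""
    scored = [
        (item_id, cosine_similarity(query_vector, vector))
        for item_id, vector in indexed_vectors.items()
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Step 1: image embeddings (toy values standing in for real model output).
image_vectors = {
    "beach.jpg": [0.9, 0.1, 0.1],
    "city.jpg": [0.1, 0.9, 0.2],
    "forest.jpg": [0.2, 0.1, 0.9],
}

# Step 2: embedding of a text query such as "sunny beach" (also a toy value).
query_vector = [0.8, 0.2, 0.1]

# Step 3: compute similarity and keep the closest matches.
results = rank_by_similarity(query_vector, image_vectors)
for item_id, score in results:
    print(f"{item_id}: {score:.3f}")
```

A linear scan like this is fine for a handful of items. For large collections, a vector database or an approximate nearest-neighbor index would replace the loop inside `rank_by_similarity`.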

How Cognyx Can Help

Organizations often struggle to move from AI experiments to production systems. This is where Cognyx can help.

1. Multimodal AI System Development

Cognyx helps companies build platforms that use modern AI models like Gemini to integrate:

- text embeddings

- image embeddings

- audio processing

- document retrieval

2. Production-Ready RAG Systems

Many companies want to build AI assistants over internal data.

Cognyx can build systems that include:

- Gemini embedding pipelines

- vector database architecture

- RAG pipelines

- enterprise search systems

3. Scalable AI Infrastructure

Handling large multimodal datasets requires infrastructure such as:

- embedding pipelines

- vector indexing

- real-time retrieval systems

Cognyx helps design scalable AI architectures that support millions of embeddings.

4. AI Product Development

Startups and enterprises can leverage Cognyx to build:

- multimodal search engines

- AI copilots

- recommendation systems

- intelligent document platforms

Final Thoughts

The introduction of Gemini Embedding 2 marks a significant step toward unified multimodal AI systems. By enabling text, images, video, audio, and documents to exist in the same embedding space, developers can build smarter search engines, AI assistants, and knowledge systems.

Platforms like Cognyx can help organizations translate these capabilities into real-world AI products by designing scalable architectures and production-ready solutions.
