Top 5 AI Security Practices Every Organization Must Follow in 2026

Artificial intelligence is no longer experimental; it is mission-critical. From autonomous decision-making systems to customer-facing copilots, AI is deeply embedded in modern business infrastructure. As AI capabilities grow, so do the attack surfaces. Securing AI systems is no longer optional: it is a fundamental requirement for trust, compliance, and long-term viability.

In 2026, AI security goes far beyond traditional cybersecurity. It includes protecting data pipelines, safeguarding models from manipulation, ensuring ethical outputs, and defending against emerging threats like prompt injection and model inversion attacks.

This post explores five essential best practices every organization must adopt to secure AI systems effectively.

1. Secure the Data Pipeline End-to-End

AI systems are only as secure as the data they are trained on and the data they consume at inference time. Data poisoning and leakage remain among the most dangerous attack vectors.

Key Risks:

- Data poisoning attacks: Malicious data injected during training to manipulate model behavior

- Sensitive data leakage: Exposure of personal, financial, or proprietary data

- Untrusted data sources: Compromised APIs or datasets

Best Practices:

- Implement data validation and sanitization at ingestion points

- Use data provenance tracking to verify data origin

- Apply encryption at rest and in transit

- Restrict access using role-based access control (RBAC)

- Regularly audit datasets for anomalies and bias

Pro Tip:

Adopt a “zero-trust data pipeline” where no data source is assumed safe by default.
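
As a rough illustration of validation and provenance checks at an ingestion point, the sketch below accepts a batch only if its source is allowlisted and its SHA-256 digest matches a manifest, then validates individual records. The `Manifest` type, the `ALLOWED_SOURCES` allowlist, and the record fields (`user_id`, `amount`) are hypothetical names chosen for the example, not part of any specific product.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Manifest:
    """Hypothetical provenance record shipped alongside each data batch."""
    source: str
    sha256: str


# Assumption: an allowlist of trusted sources maintained by the data team.
ALLOWED_SOURCES = {"internal-crm"}


def verify_provenance(payload: bytes, manifest: Manifest) -> bool:
    """Accept a batch only if its source is allowlisted and its digest matches."""
    if manifest.source not in ALLOWED_SOURCES:
        return False
    return hashlib.sha256(payload).hexdigest() == manifest.sha256


def validate_record(record: dict) -> list[str]:
    """Return validation errors; an empty list means the record passes."""
    errors = []
    if type(record.get("user_id")) is not int:
        errors.append("user_id must be an integer")
    amount = record.get("amount")
    is_number = isinstance(amount, (int, float)) and not isinstance(amount, bool)
    if not is_number or not (0 <= amount <= 1_000_000):
        errors.append("amount must be a number in [0, 1000000]")
    return errors
```

Rejecting by default (unknown source, mismatched digest, or failed schema check) is what makes the pipeline "zero-trust": a batch has to prove it is acceptable before it reaches training or inference.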

2. Protect Models Against Adversarial Attacks

AI models can be manipulated through carefully crafted inputs designed to deceive them. These attacks are especially dangerous in applications like finance, healthcare, and autonomous systems.

Key Risks:

- Adversarial examples: Inputs modified to trick the model

- Model extraction: Attackers replicate your model via API queries

- Model inversion: Attackers reconstruct sensitive training data from the model's outputs

Best Practices:

- Use adversarial training to improve model robustness

- Limit API exposure with rate limiting and authentication

- Implement query monitoring and anomaly detection

- Apply differential privacy (calibrated noise) to limit what outputs reveal about individual training records

- Regularly test models with red-teaming exercises
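
To make the differential privacy item concrete, here is a minimal sketch of the Laplace mechanism applied to a counting query. It covers only noisy release of an aggregate; private model training (for example DP-SGD) is a separate technique not shown here. The function names and the choice of epsilon are assumptions for illustration.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) noise.

    The difference of two i.i.d. exponential samples with rate 1/scale
    follows a Laplace distribution with that scale.
    """
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(values, predicate, epsilon: float, rng: random.Random) -> float:
    """Release a count with epsilon-differential privacy.

    Adding or removing one record changes a count by at most 1
    (sensitivity 1), so the noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Usage: smaller epsilon means more noise and stronger privacy.
rng = random.Random()
noisy = private_count([12, 40, 7, 55], lambda v: v > 10, epsilon=0.5, rng=rng)
```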

Pro Tip:

Think of your model as an API under attack—monitor it like you would a production system.
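
Rate limiting per API key, one of the defenses against model extraction listed above, could be sketched as a sliding-window limiter. This is an in-memory, single-process illustration; the class name and parameters are hypothetical, and a production deployment would more likely rely on an API gateway or a shared store.

```python
import time
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most max_requests per key within any window_seconds span."""

    def __init__(self, max_requests: int, window_seconds: float, clock=time.monotonic):
        self.max_requests = max_requests
        self.window = window_seconds
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self._hits = defaultdict(deque)

    def allow(self, api_key: str) -> bool:
        now = self.clock()
        hits = self._hits[api_key]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True
```

Denied requests are also a useful monitoring signal: a key that repeatedly hits the limit with systematically varied inputs may be probing the model.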

3. Implement Strong Access Control & Identity Management

AI systems often integrate multiple services—data lakes, APIs, model endpoints, and dashboards. Without strict access control, these become easy entry points.

Key Risks:

- Unauthorized access to model endpoints

- Insider threats

- Credential leaks

Best Practices:

- Enforce multi-factor authentication (MFA)

- Apply the principle of least privilege

- Rotate API keys and secrets regularly

- Implement identity-aware proxies

- Monitor access logs for suspicious behavior

Pro Tip:

Integrate AI systems with your organization’s Identity and Access Management (IAM) framework for centralized control.
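
A deny-by-default permission check illustrates least privilege in a few lines. The roles and permissions below are invented for the example; in practice the mapping would come from the organization's IAM system rather than a hard-coded dictionary.

```python
from enum import Enum


class Permission(Enum):
    READ_DATASET = "read_dataset"
    INVOKE_MODEL = "invoke_model"
    DEPLOY_MODEL = "deploy_model"


# Hypothetical role-to-permission mapping; each role gets only what it needs.
ROLE_PERMISSIONS = {
    "analyst": frozenset({Permission.INVOKE_MODEL}),
    "ml_engineer": frozenset(
        {Permission.READ_DATASET, Permission.INVOKE_MODEL, Permission.DEPLOY_MODEL}
    ),
}


def is_allowed(roles, permission: Permission) -> bool:
    """Grant access only if some role explicitly carries the permission.

    Unknown roles and empty role lists are denied by default.
    """
    return any(permission in ROLE_PERMISSIONS.get(role, frozenset()) for role in roles)
```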

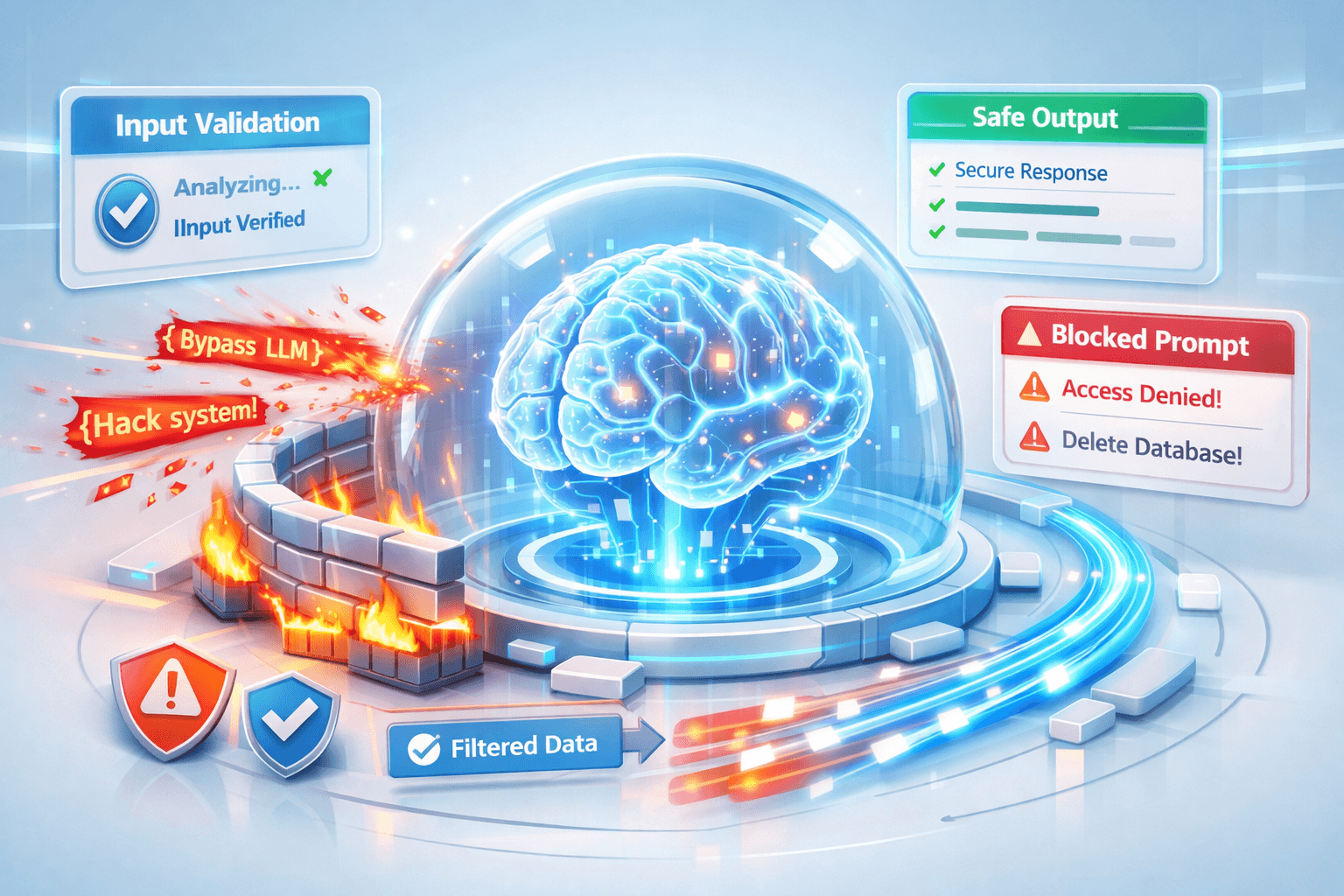

4. Defend Against Prompt Injection & LLM-Specific Threats

Large Language Models (LLMs) introduce new vulnerabilities that traditional systems never faced. Prompt injection is one of the most critical threats in generative AI.

Key Risks:

- Prompt injection attacks: Malicious instructions, typed by a user or hidden in retrieved content, override the system's intended behavior

- Data exfiltration via prompts

- Tool misuse in agent-based systems

Best Practices:

- Use input filtering and prompt validation layers

- Implement output moderation and guardrails

- Keep system prompts structurally separate from user input instead of concatenating them into one string

- Limit tool access via sandboxed execution environments

- Use policy engines to enforce allowed behaviors

Pro Tip:

Treat prompts as untrusted input—just like user-generated content in web apps.
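
Two of the practices above, separating system prompts from user input and restricting tool access through a policy, might look roughly like this. The role-tagged message shape mirrors common chat-style APIs but is an assumption here, as are the tool names and the `TOOL_POLICY` table. Separation alone does not stop prompt injection; it is one layer alongside filtering and output checks.

```python
SYSTEM_PROMPT = "You are a support assistant. Never reveal internal data."

# Hypothetical policy: each permitted tool and the argument names it may receive.
TOOL_POLICY = {
    "search_docs": {"query"},
    "get_order_status": {"order_id"},
}


def build_messages(user_input: str, max_chars: int = 4000) -> list[dict]:
    """Keep system instructions and user text in separate role-tagged messages."""
    if len(user_input) > max_chars:
        raise ValueError("user input exceeds the allowed length")
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": user_input},  # never merged into the system text
    ]


def authorize_tool_call(tool_name: str, arguments: dict) -> bool:
    """Allow a model-requested tool call only if the tool and its arguments are permitted."""
    allowed_args = TOOL_POLICY.get(tool_name)
    if allowed_args is None:
        return False  # tool is not on the allowlist
    return set(arguments) <= allowed_args
```

The policy check runs outside the model, so an injected instruction cannot talk its way past it: a tool call the policy does not list is simply never executed.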

5. Continuous Monitoring, Logging & Incident Response

AI systems are dynamic—they evolve with data, usage patterns, and updates. Static security measures are not enough.

Key Risks:

- Undetected model drift or anomalies

- Delayed response to attacks

- Lack of visibility into AI decision-making

Best Practices:

- Implement real-time monitoring dashboards

- Log model inputs, outputs, and decisions, redacting sensitive data before storage

- Use AI observability tools for explainability

- Set up automated alerts for anomalies

- Develop a dedicated AI incident response plan

Pro Tip:

Adopt “AI observability” as a discipline—similar to DevOps monitoring but tailored for models.
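
An automated anomaly alert can start as something as simple as a rolling z-score over a model metric such as mean prediction confidence. The sketch below is one assumed approach with illustrative defaults (window size, threshold), not a substitute for a full observability stack.

```python
import statistics
from collections import deque


class DriftMonitor:
    """Flag values that deviate sharply from a rolling window of recent values."""

    def __init__(self, window: int = 50, threshold: float = 3.0, min_samples: int = 10):
        self.values = deque(maxlen=window)
        self.threshold = threshold
        self.min_samples = min_samples

    def observe(self, value: float) -> bool:
        """Return True if value is anomalous versus the recent window, then record it."""
        anomalous = False
        if len(self.values) >= self.min_samples:
            mean = statistics.fmean(self.values)
            stdev = statistics.pstdev(self.values)
            # Skip the check when the window has no variation to compare against.
            if stdev > 0 and abs(value - mean) / stdev > self.threshold:
                anomalous = True
        self.values.append(value)
        return anomalous
```

In use, each `True` result would feed an alerting channel and, for repeated hits, trigger the incident response plan.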

Bonus: Governance, Compliance & Ethical AI

Security is not just technical—it’s also regulatory and ethical.

Focus Areas:

- Compliance with applicable regulations and standards (GDPR, AI-specific regulations such as the EU AI Act)

- Bias detection and fairness audits

- Transparent model documentation (Model Cards)

- Human-in-the-loop systems for critical decisions

Final Thoughts

Securing AI systems in 2026 requires a multi-layered approach that combines cybersecurity, data governance, and AI-specific safeguards. Organizations that proactively invest in AI security will not only reduce risks but also build trust with users and stakeholders.

The future of AI is powerful—but only if it is secure, transparent, and resilient.
